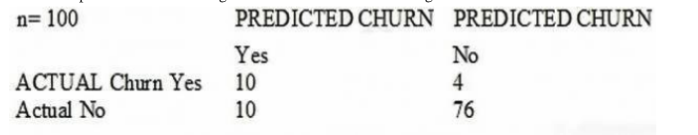

A large mobile network operating company is building a machine learning model to predict customers who are likely to unsubscribe from the service. The company plans to offer an incentive to these customers, as the cost of churn is far greater than the cost of the incentive. The model produces the confusion matrix shown in the image above after evaluation on a test dataset of 100 customers. Based on the model evaluation results, why is this a viable model for production?


Correct Answer: C. The model is 86% accurate and the cost incurred by the company as a result of false positives is less than the false negatives.

Why Option C is right: The company states that the cost of churn is far greater than the cost of the incentive. A False Negative (Actual Churn "Yes", Predicted "No") means a churning customer is missed and leaves, which is the most expensive outcome. A False Positive (Actual Churn "No", Predicted "Yes") means an incentive is given to a customer who would have stayed anyway. That is a small loss compared with losing a customer. Since the model captures most churners, maintains high overall accuracy (86%), and makes its errors mostly on the cheaper side, it is a viable model for production.
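The reasoning above can be checked with a little arithmetic. The sketch below is illustrative only: the confusion matrix lives in the image and is not reproduced in the text, so the four counts are assumed values chosen to be consistent with 86% accuracy on 100 customers, and the two per-customer costs are hypothetical placeholders for "incentive is cheap, churn is expensive". The function name `summarize` is likewise made up for this example.

```python
def summarize(tp, fp, fn, tn, incentive_cost, churn_cost):
    """Return accuracy, recall, and the total cost of each error type."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        # Share of actual churners the model catches.
        "recall": tp / (tp + fn),
        # False positives: incentive paid to customers who would have stayed.
        "fp_cost": fp * incentive_cost,
        # False negatives: churners the model missed, who then leave.
        "fn_cost": fn * churn_cost,
    }


# ASSUMED counts (not taken from the text) that sum to 100 customers
# and give 86 correct predictions.
# HYPOTHETICAL costs: an incentive costs 10 units, a lost customer 100.
result = summarize(tp=10, fp=10, fn=4, tn=76,
                   incentive_cost=10, churn_cost=100)

for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions the false positives cost less in total than the false negatives, which is the comparison Option C makes. Note that the conclusion depends on the cost ratio: with these counts it holds whenever a lost customer costs more than 2.5 times the incentive.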